Gesture is often assumed to be universal. But the same movement can mean entirely different things depending on who is reading it, and from where.

My MFA thesis project is an interactive installation that uses real-time hand tracking to detect participants' gestures and project a rotating series of textual interpretations onto a fifteen-foot gallery wall. The system does not tell you what your gesture means. It shows you what it could mean.

The Research

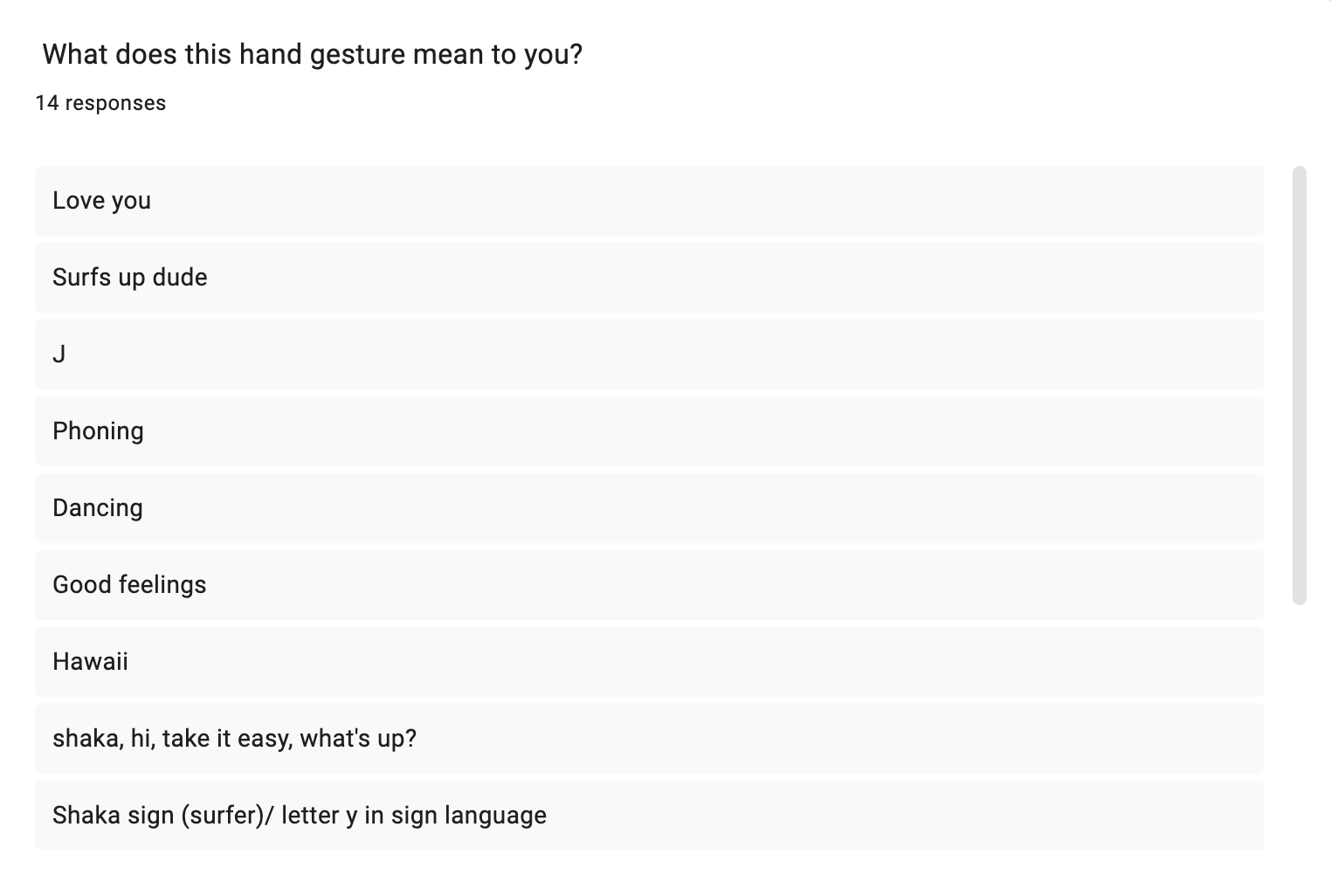

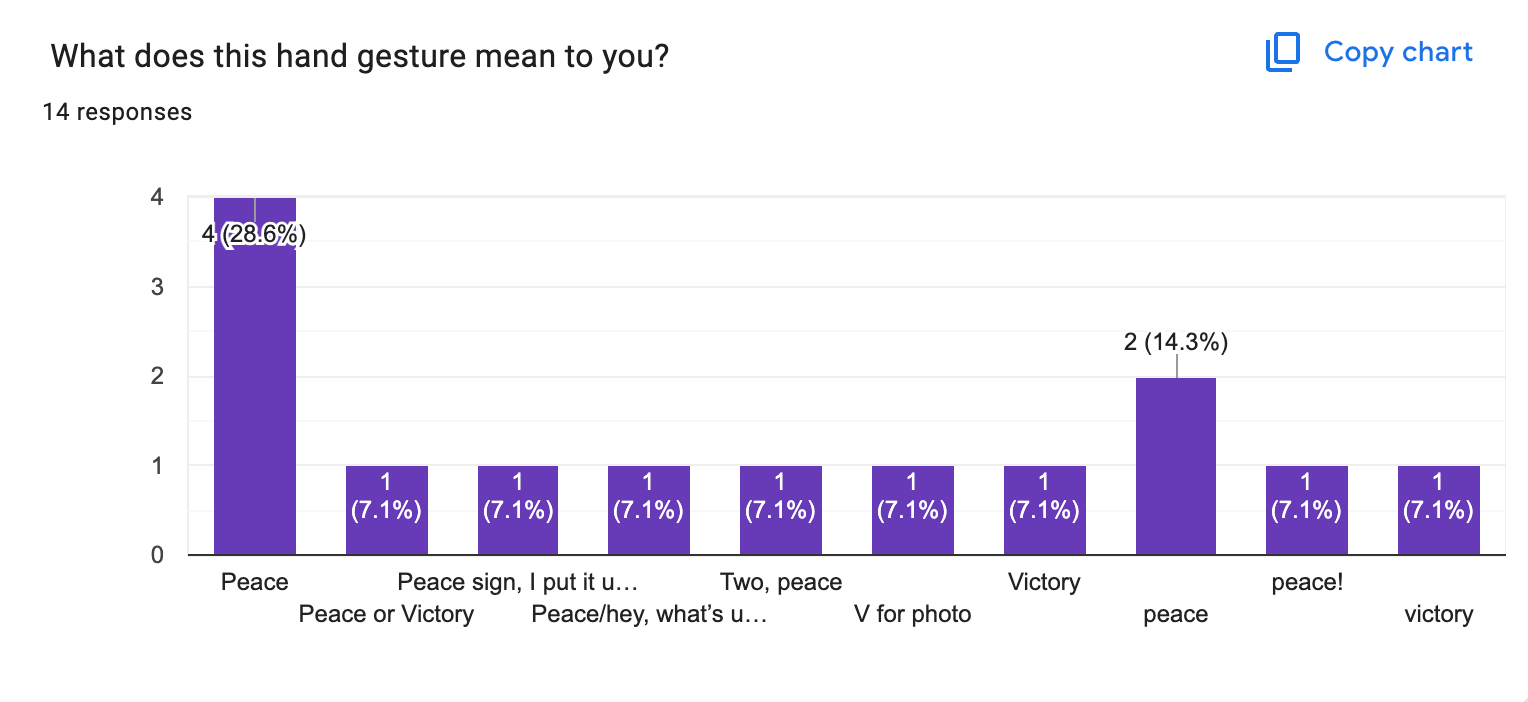

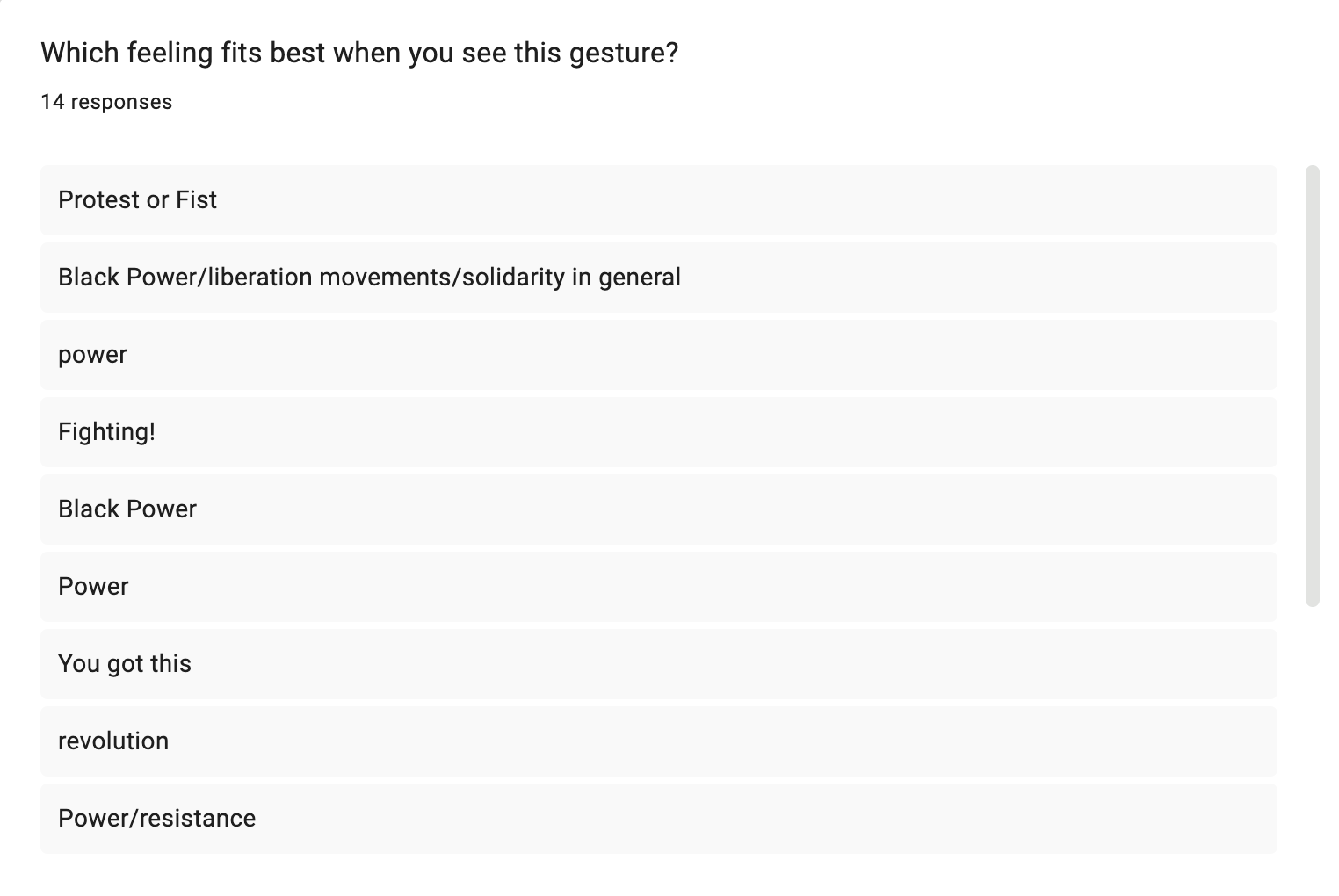

To build the content of the installation, I conducted a survey asking participants from diverse cultural and generational backgrounds a single open-ended question: What does this hand gesture mean to you? The results were immediate and telling. A gesture as apparently legible as the index-and-pinky extension generated at least three distinct readings (rock culture, ASL for "I love you," and a symbol of evil or the demonic), depending entirely on the interpreter's frame of reference.

The survey confirmed what the project set out to explore: gestures are not fixed signs. They are interpreted signs, and interpretation is always shaped by the body, the culture, and the history of the person doing the reading.

The Installation

The space is spare. A camera is mounted above the interaction zone. A white vinyl ring on the floor marks where participants stand—open on one side, inviting entry rather than enforcing containment. Across from the projection wall, a thermographic display renders the active participant's hand in heat-mapped color, abstracting the gesture into biological data. To the right, a grid monitor loops a set of pre-recorded gestures, functioning as both reference and archive.

No instructions are posted. Participants enter the ring, raise their hands, and discover the system through use.

The Technical System

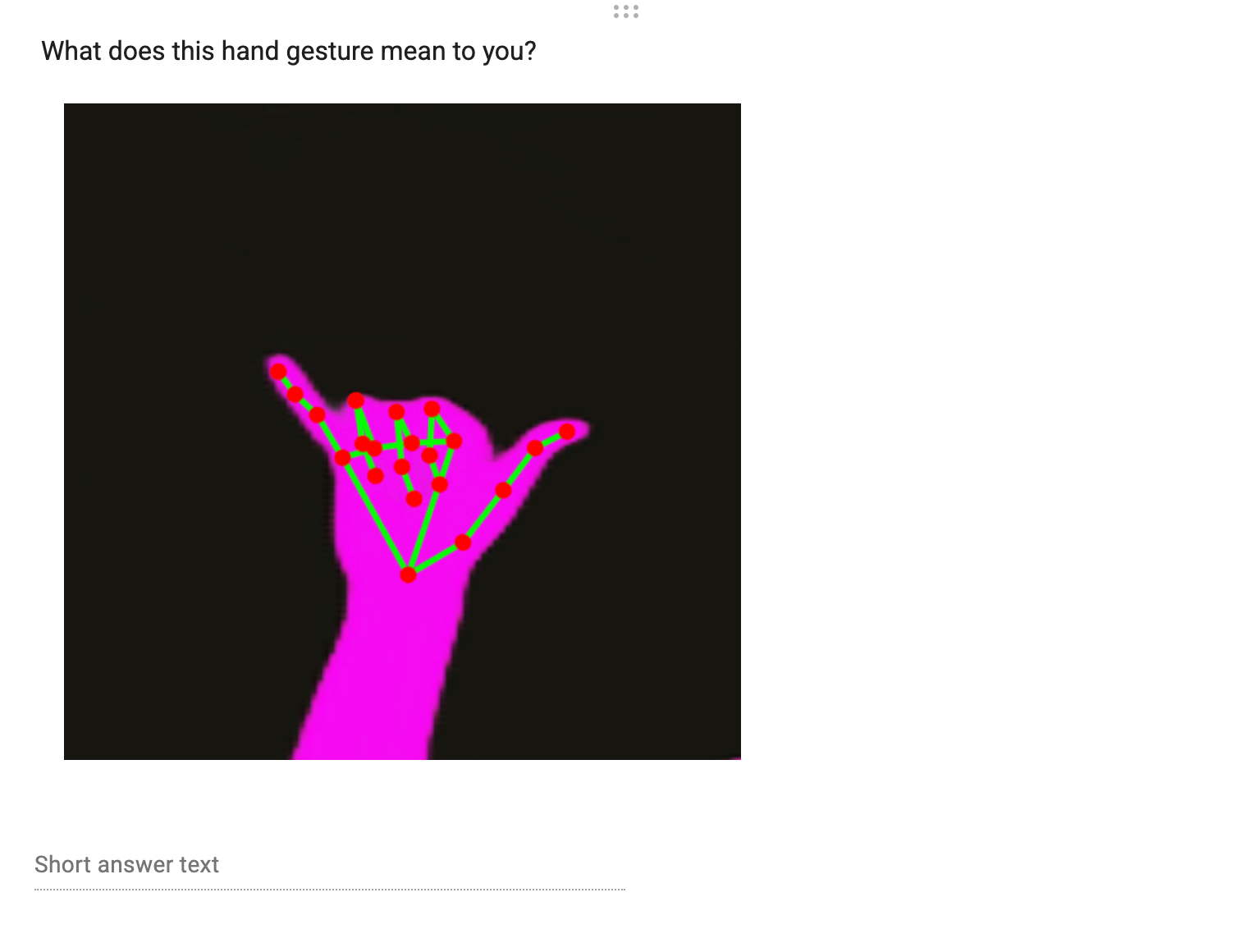

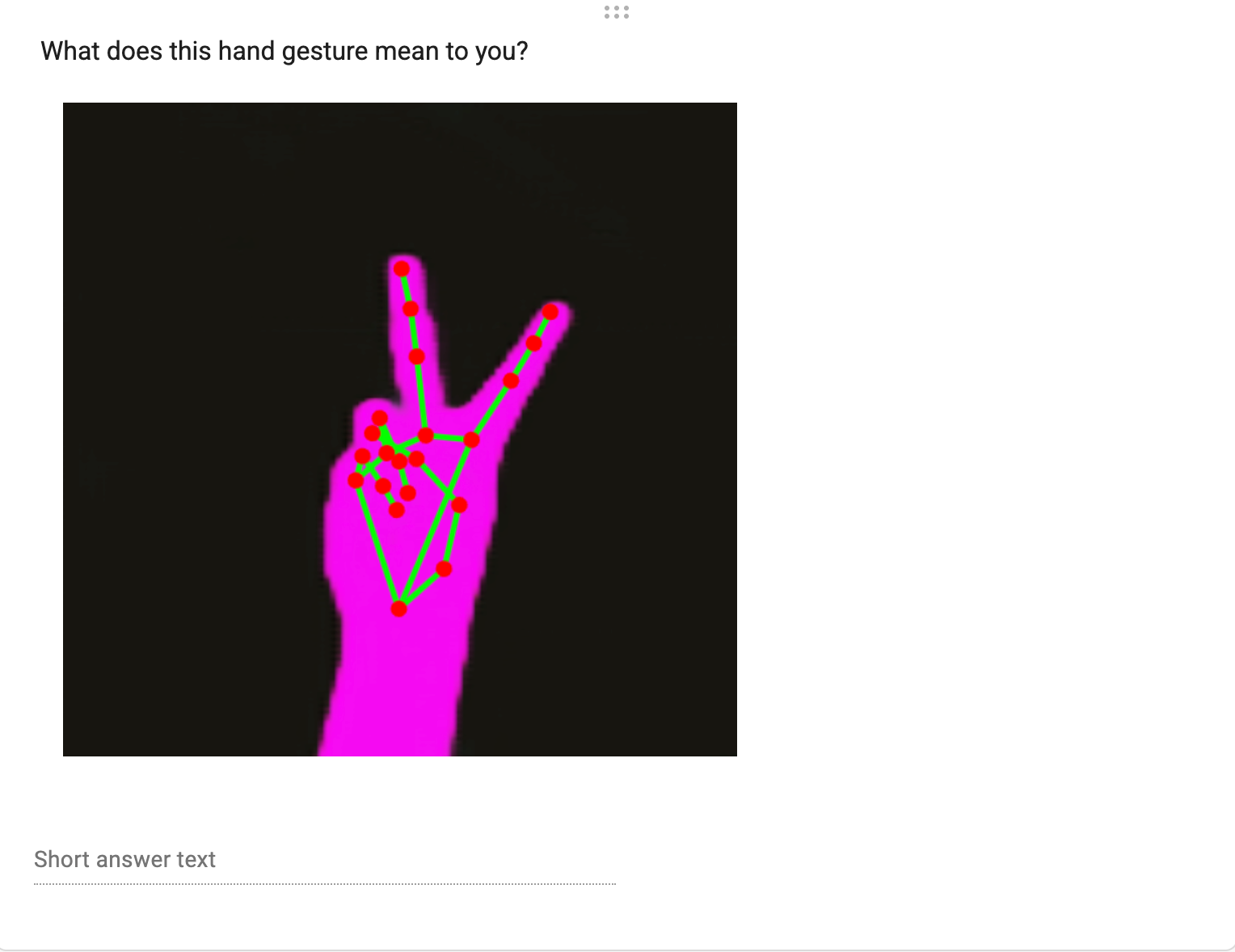

The gesture recognition pipeline is built in TouchDesigner using Google's MediaPipe hand tracking library. The system identifies 21 skeletal landmarks across the hand in real time and derives measurements from them: distance from fingertip to palm, joint angles, and finger spread. When a set of conditions is met, a gesture is classified and the text output system is triggered.
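As a rough illustration of what "a set of conditions" can look like, here is a minimal Python sketch of a rule-based classifier over the 21 landmarks. It is not the installation's actual TouchDesigner network. The function names, the 150-degree threshold, the palm approximation, and the gesture labels are all assumptions made for this example. Only the landmark numbering (0 for the wrist, then four joints per finger from base to tip) follows MediaPipe's hand model.

```python
import math

# MediaPipe's hand model returns 21 landmarks per hand: index 0 is the wrist,
# followed by four joints per finger, ordered base to tip.
FINGERS = {
    "thumb": (1, 2, 3, 4),
    "index": (5, 6, 7, 8),
    "middle": (9, 10, 11, 12),
    "ring": (13, 14, 15, 16),
    "pinky": (17, 18, 19, 20),
}


def vec(a, b):
    """Vector pointing from point a to point b."""
    return tuple(q - p for p, q in zip(a, b))


def angle_between(v1, v2):
    """Angle in degrees between two vectors; 0.0 if either has zero length."""
    n1, n2 = math.hypot(*v1), math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        return 0.0
    cos = sum(a * b for a, b in zip(v1, v2)) / (n1 * n2)
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))


def palm_center(lm):
    """Approximate the palm as the mean of the wrist and four finger bases."""
    pts = [lm[i] for i in (0, 5, 9, 13, 17)]
    return tuple(sum(axis) / len(pts) for axis in zip(*pts))


def is_extended(lm, finger, min_angle=150.0):
    """Assumed rule: the finger's middle joint is nearly straight and the
    fingertip sits farther from the palm than that joint does."""
    base, mid, upper, tip = FINGERS[finger]
    joint_angle = angle_between(vec(lm[mid], lm[base]), vec(lm[mid], lm[upper]))
    palm = palm_center(lm)
    return (joint_angle >= min_angle
            and math.dist(lm[tip], palm) > math.dist(lm[mid], palm))


def finger_spread(lm, a, b):
    """Angle in degrees between the base-to-tip directions of two fingers."""
    (a0, _, _, a1), (b0, _, _, b1) = FINGERS[a], FINGERS[b]
    return angle_between(vec(lm[a0], lm[a1]), vec(lm[b0], lm[b1]))


def classify(lm):
    """Return a gesture label when its conditions are met, else None."""
    up = {f: is_extended(lm, f) for f in ("index", "middle", "ring", "pinky")}
    if up["index"] and up["pinky"] and not up["middle"] and not up["ring"]:
        return "index_and_pinky"
    if all(up.values()):
        return "open_hand"
    return None
```

Here `lm` is a list of 21 `(x, y, z)` tuples, one per landmark. A rule-based approach like this keeps each gesture definition readable and easy to tune by hand, which suits a small, curated gesture set. A larger vocabulary would more likely call for a trained classifier.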

The phrases are drawn from survey responses and cross-cultural gesture research. They cycle and rotate, so no single interpretation is presented as final. The system is designed for multiplicity, not resolution.
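The rotation itself can be sketched in a few lines of Python. This is an illustrative sketch, not the installation's text system: the class name, the gesture label, and the phrase bank structure are assumptions, and the three phrases are simply the survey readings mentioned earlier.

```python
import itertools

# Hypothetical phrase bank: gesture label -> interpretations collected for it.
PHRASES = {
    "index_and_pinky": [
        "rock culture",
        'ASL for "I love you"',
        "a symbol of evil",
    ],
}


class InterpretationCycler:
    """Hands out interpretations in rotation so none is shown as final."""

    def __init__(self, phrases):
        # One endless iterator per gesture; gestures with no phrases are skipped.
        self._cycles = {
            gesture: itertools.cycle(options)
            for gesture, options in phrases.items()
            if options
        }

    def next_phrase(self, gesture):
        """Return the next interpretation for a gesture, or None if unknown."""
        cycle = self._cycles.get(gesture)
        return next(cycle) if cycle is not None else None
```

Each call to `next_phrase` advances that gesture's rotation by one step and wraps around at the end of its list, so a participant who holds a gesture sees every reading in turn.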

At the Exhibition

What happened in practice exceeded the design. Participants entered the ring without prompting, began experimenting, and quickly moved from gesture recognition to something closer to performance: exaggerating their gestures, holding configurations in place, moving rhythmically. The space became a site of physical play.

More importantly, people became newly conscious of their own hands. What a hand does in ordinary life goes unnoticed. In the installation, that habitual movement became something to examine: its shapes, its possible readings, the cultural weight it carries without the person ever having chosen to put it there.

Theoretical Framework

The project draws on John Durham Peters' Speaking into the Air (1999), which argues that misunderstanding is not a failure of communication but a structural condition of it. We never fully access another person's interior world. The installation takes this as a generative constraint rather than a problem to solve, building a system precise enough to recognize gesture, but deliberately open in how it interprets meaning.

Built with MediaPipe, TouchDesigner · Exhibited at Mason Gross School of the Arts, Rutgers University, Spring 2026